Most AI model releases follow a familiar pattern: bigger numbers on benchmarks, a new version suffix, a press release. NVIDIA’s Nemotron 3 Super, released on 11 March 2026, is different in ways that matter for how businesses think about deploying AI at scale.

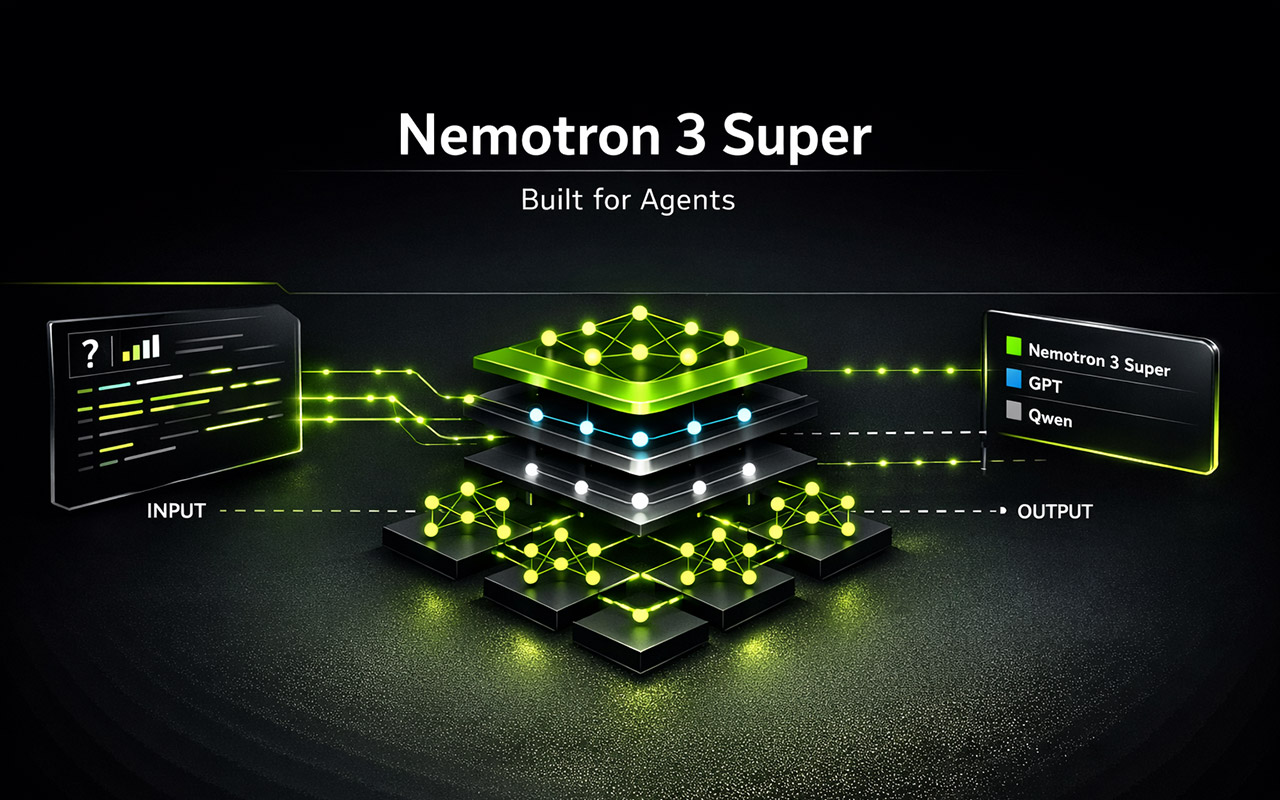

This isn’t primarily a model for answering questions or writing copy. It’s specifically engineered for agentic AI — systems where an AI works autonomously through multi-step tasks, calling tools, writing and executing code, managing long workflows, and coordinating with other AI agents. That distinction is important, and it shapes everything about how the model was designed.

At 120 billion total parameters but only 12 billion active at any given moment, Nemotron 3 Super is built to solve two problems that make agentic AI genuinely hard to deploy in production: the cost of running a large model continuously across long tasks, and the tendency of AI agents to lose track of what they were originally trying to do. Here’s a clear breakdown of what’s new, what the benchmark numbers mean, and what it signals for businesses building or evaluating AI automation in Singapore and across Southeast Asia.

The Two Problems Nemotron 3 Super Is Built to Solve

Problem 1: The Thinking Tax

When you build a multi-agent system — multiple AI models coordinating to complete a complex task — the token volumes involved are dramatically higher than in standard chat applications. NVIDIA estimates that multi-agent systems generate up to 15 times the tokens of a typical conversation, because agents must re-send context, tool outputs, and reasoning steps at each turn.

The obvious solution of using the most powerful reasoning model for every sub-task is what NVIDIA calls the thinking tax: using a heavyweight model for simple steps makes the overall system too slow and expensive for practical deployment. You need a model that’s genuinely capable on complex tasks but efficient enough to run continuously.

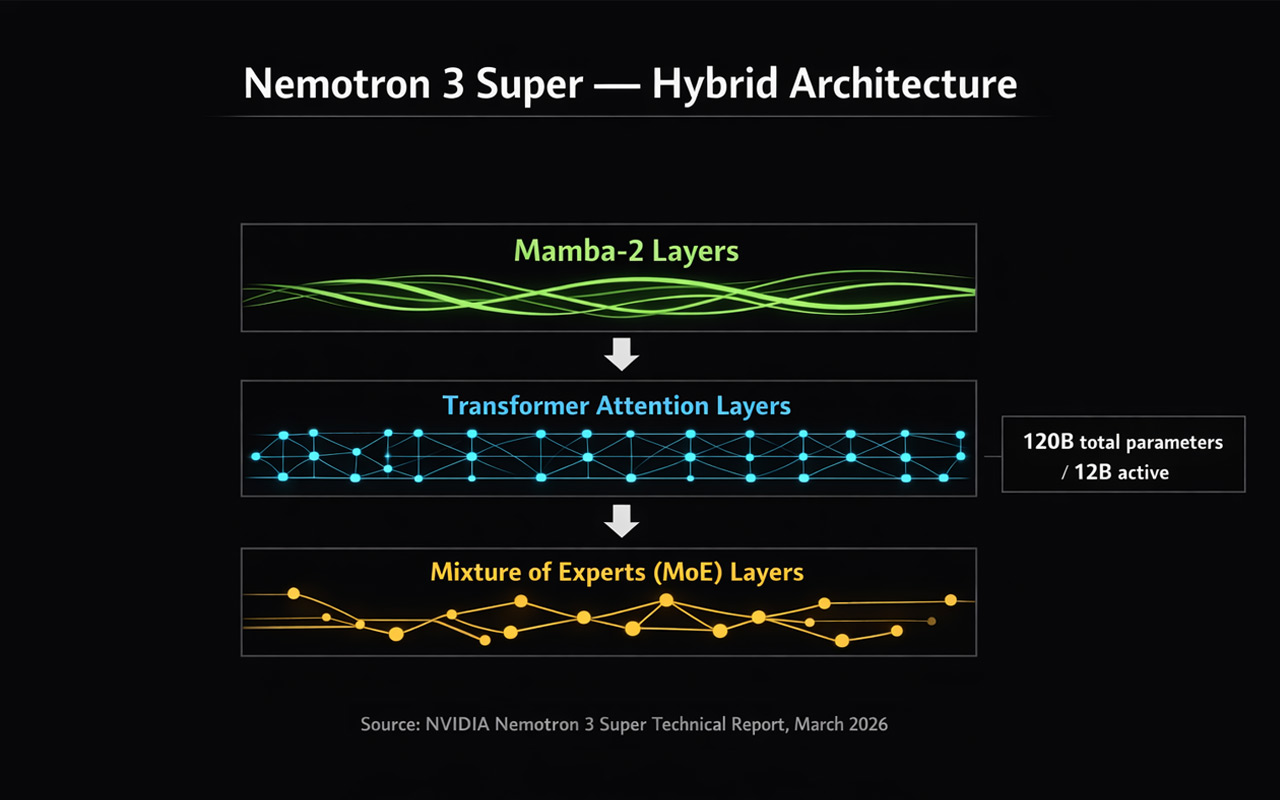

Nemotron 3 Super addresses this with a hybrid mixture-of-experts (MoE) architecture. Despite having 120 billion total parameters, only 12 billion are active at any moment — the model routes each token to a relevant subset of specialist modules rather than running the full parameter set every time. The result is over 5x throughput improvement versus the previous Nemotron Super, while maintaining accuracy on complex tasks.

Problem 2: Context Explosion and Goal Drift

The second problem is subtler but equally serious for production agentic deployments. As an agent works through a long task — processing a large codebase, analysing a stack of documents, maintaining a multi-hour workflow — the sheer volume of context it must track grows rapidly. Most transformer-based models struggle here: their context handling becomes less precise as the window fills, and agents gradually lose alignment with the original objective. NVIDIA calls this goal drift.

Nemotron 3 Super tackles this with a native 1 million token context window, made practical by its hybrid Mamba-Transformer backbone. Mamba layers handle the majority of sequence processing using state space models (SSMs), which have linear rather than quadratic complexity with respect to sequence length. This is what makes a 1 million token context window achievable without prohibitive memory requirements — not just a theoretical maximum but something agents can actually use in practice.

What Makes the Architecture Different

Hybrid Mamba-Transformer Backbone

Rather than using a pure transformer architecture, Nemotron 3 Super interleaves three distinct layer types, each handling what it does best.

Mamba-2 layers handle the bulk of sequence processing. Because SSMs scale linearly rather than quadratically with sequence length, they’re what makes the 1M token context window practical. When an agent needs to reason over an entire codebase or a long document history, Mamba layers keep the memory footprint manageable.

Transformer attention layers are placed at key depths within the architecture. Pure SSMs can struggle with precise associative recall — finding one specific fact in a long context when conflicting information surrounds it. The transformer layers preserve this capability, ensuring high-fidelity retrieval even in dense, noisy contexts.

MoE layers scale the effective parameter count without the cost of dense computation. Only a subset of expert modules activates per token, keeping latency low even when many agents run concurrently on shared infrastructure.

Latent MoE — More Experts, Same Cost

Standard MoE architectures route tokens directly from the model’s full hidden dimension to expert modules. As models grow, this routing step becomes a bottleneck. Nemotron 3 Super introduces latent MoE: token embeddings are compressed into a low-rank latent space before routing decisions are made. Expert computation happens in this smaller dimension, and results are projected back afterward.

In practice, this means the model can consult 4 times as many specialists for the exact same computational cost as routing to one. In agentic settings where a single workflow might span tool calls, code generation, data analysis, and reasoning within a few turns, this granularity of specialisation is genuinely valuable — distinct experts can be activated for Python syntax versus SQL logic versus financial calculation, only when strictly needed.

Multi-Token Prediction

Most language models are trained to predict one token at a time. Nemotron 3 Super uses multi-token prediction (MTP), where specialised prediction heads forecast several future tokens simultaneously from each position.

This has two concrete effects. During training, predicting multiple future tokens forces the model to internalise longer-range logical dependencies — it must learn coherent sequences rather than just plausible next words. At inference, MTP enables built-in speculative decoding, where multiple future tokens are generated in one forward pass and verified in parallel. NVIDIA reports up to 3x wall-clock speedups for structured generation tasks like code and tool calls, without requiring a separate draft model.

Native NVFP4 Pretraining

Most quantised models start at full precision and get compressed after training — which introduces accuracy loss. Nemotron 3 Super is pretrained natively in NVFP4, NVIDIA’s 4-bit floating-point format optimised for Blackwell GPUs. The model learns to be accurate within 4-bit arithmetic constraints from the very first gradient update, rather than adapting to them after the fact.

NVIDIA reports 4x faster inference on NVIDIA B200 compared to FP8 on H100, while maintaining accuracy. For businesses running AI inference at scale, this has direct cost implications — the same workload on newer hardware runs significantly faster and cheaper.

How It Was Trained

Nemotron 3 Super’s training pipeline runs in three sequential phases, each building on the last.

Pretraining ran on 25 trillion tokens using NVFP4, with a corpus of 10 trillion unique curated tokens seen multiple times across the run, plus additional compute focused on reasoning and coding tasks. The model builds broad world knowledge and language understanding at this stage.

Supervised fine-tuning followed, using approximately 7 million samples drawn from a broader pool of 40 million covering reasoning, instruction following, coding, safety, and multi-step agent tasks. This shapes the model’s behaviour across the task types it will encounter in deployment and gives the subsequent reinforcement learning phase a stable starting point.

Reinforcement learning then refines behaviour against verifiable outcomes across 21 environment configurations using NVIDIA’s NeMo Gym and NeMo RL frameworks. These aren’t single-turn question-answer tasks — they evaluate the model’s ability to perform sequences of actions, generate correct tool calls, write functional code, and produce multi-part plans that satisfy verifiable criteria. The RL phase generated approximately 1.2 million environment rollouts during training.

This trajectory-based reinforcement learning approach is what produces a model that behaves reliably under multi-step workflows, reduces reasoning drift, and handles the structured operations common in real agentic pipelines — as opposed to a model that performs well on static benchmarks but degrades under long autonomous runs.

The Benchmark Numbers

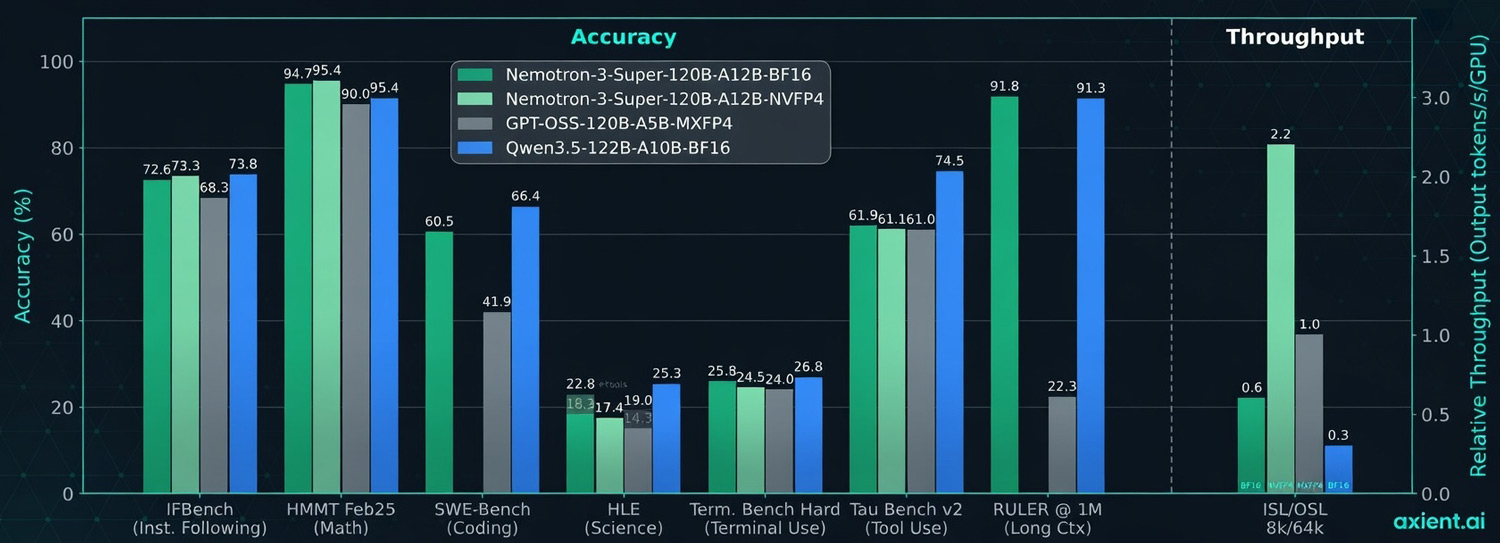

Nemotron 3 Super’s benchmark results are strong, particularly on the agentic and tool-use tasks it was designed for. The numbers below compare it against Qwen 3.5 122B, a similarly sized open model.

| Benchmark | Nemotron 3 Super | Qwen 3.5 122B | What it tests |

|---|---|---|---|

| IFBench (Instruction Following) | 94.4% | 73.4% | Following complex instructions |

| HMMT Feb25 (Math) | 90.4% | 46.3% | Advanced mathematics |

| SWE-Bench (Coding) | 60.5% | 41.9% | Real software engineering tasks |

| HLE (Science) | 66.4% | 26.8% | Expert-level science reasoning |

| Tau Bench v2 (Tool Use) | 91.8% | 22.3% | Real-world tool calling |

| RULER @ 1M (Long Context) | 91.3% | — | 1 million token context recall |

The Tau Bench v2 result — 91.8% on tool use, against 22.3% for Qwen — is the most striking. Tool use is directly relevant to agentic deployment: it measures how reliably a model calls the right tools with the right parameters across multi-step tasks. A model that scores 91.8% on this in testing is meaningfully more deployable in production agentic workflows than one scoring 22.3%.

The RULER @ 1M result (91.3%) confirms that the 1 million token context window is functional, not just nominal — the model maintains high recall accuracy across a full million-token context, which is what matters for agents working across large codebases or long document collections.

The Super + Nano Deployment Pattern

One of the more practically useful ideas in the NVIDIA announcement is the Super + Nano deployment pattern — a tiered architecture for agentic systems that matches model capacity to task complexity.

Nemotron 3 Nano (released in December 2025) handles targeted, individual steps within a workflow — the simple, high-frequency tasks where speed and cost matter more than depth. Nemotron 3 Super handles the complex, multi-step tasks that require deeper planning and reasoning. For the most demanding expert-level work, proprietary frontier models like GPT-5.4 or Claude can be called in.

In software development, for example: simple merge requests go to Nano, complex codebase analysis and multi-file refactoring go to Super, and tasks requiring frontier-level reasoning can be escalated to a proprietary model. This tiered approach is how businesses can build production agentic systems that are both capable and economically viable — not routing everything through the most expensive model available.

This mirrors a pattern we’ve seen emerging across the industry. We wrote recently about GPT-5.4’s tool search capability, which similarly reduces the cost of running large models across complex tool ecosystems. The direction is consistent: agentic AI is becoming more modular, more tiered, and more practically deployable — not just more capable in isolation.

Fully Open — What That Means in Practice

Nemotron 3 Super is fully open: weights, datasets, and training recipes are all publicly available. This is significant for businesses in Southeast Asia where data sovereignty, privacy, and on-premise deployment are often hard requirements.

Full model weights are available on Hugging Face and through NVIDIA NIM. The NVIDIA Nemotron Open Model License gives enterprises the flexibility to deploy on their own infrastructure, maintaining full data control. Ready-to-use deployment cookbooks cover vLLM, SGLang, and NVIDIA TensorRT LLM. Fine-tuning cookbooks cover LoRA/SFT for domain adaptation and GRPO/DAPO for advancing agentic reasoning capabilities.

For businesses in Singapore and across Southeast Asia that need to customise AI models for local languages, domain-specific knowledge, or compliance requirements, the availability of full weights and training recipes is meaningfully different from API-only access to a proprietary model. You can adapt it, fine-tune it, and run it entirely within your own infrastructure.

What This Means for Businesses in Southeast Asia

Nemotron 3 Super is a technical release aimed primarily at developers building agentic systems. But the implications for business AI strategy are real, and worth thinking through clearly.

- undefined The combination of efficient architecture, 1M token context, and strong tool-use performance addresses the specific problems that have made agentic deployments fragile in practice. If you’ve evaluated agentic AI before and found it too slow, too expensive, or too prone to losing the thread — the landscape is shifting.

- Open weights change the calculus for regulated industries. Finance, healthcare, and legal sectors in Singapore, Jakarta, and Kuala Lumpur operate under data governance requirements that make API-only AI tools complicated to use. A fully open model with enterprise licensing can be deployed on-premise, fine-tuned on proprietary data, and audited end-to-end.

- The tiered deployment model is the right framework for cost-effective AI automation. Not every step in a workflow requires a frontier model. Businesses that architect their AI systems with task-appropriate model tiers will achieve significantly better economics than those routing everything through a single heavyweight model.

- The coding and software development use cases are particularly mature. SWE-Bench (real software engineering tasks), IFBench (instruction following), and the terminal use benchmark all show strong performance. For technology companies and in-house engineering teams in Southeast Asia evaluating AI-assisted development tools, Nemotron 3 Super is worth a direct evaluation.

We’ve also recently covered Anthropic’s research on AI’s actual labour market impact — which found that deployment is currently far behind theoretical capability. Nemotron 3 Super is one of several releases in early 2026 that is actively closing that gap, particularly in the agentic and autonomous workflow categories where the deployment barrier has historically been highest.

At Axient.ai, we help businesses across Singapore, Jakarta, Bangkok, and Kuala Lumpur evaluate and deploy AI tools that are genuinely ready for production — including agentic systems where the architecture decisions made now will determine long-term performance and cost.